
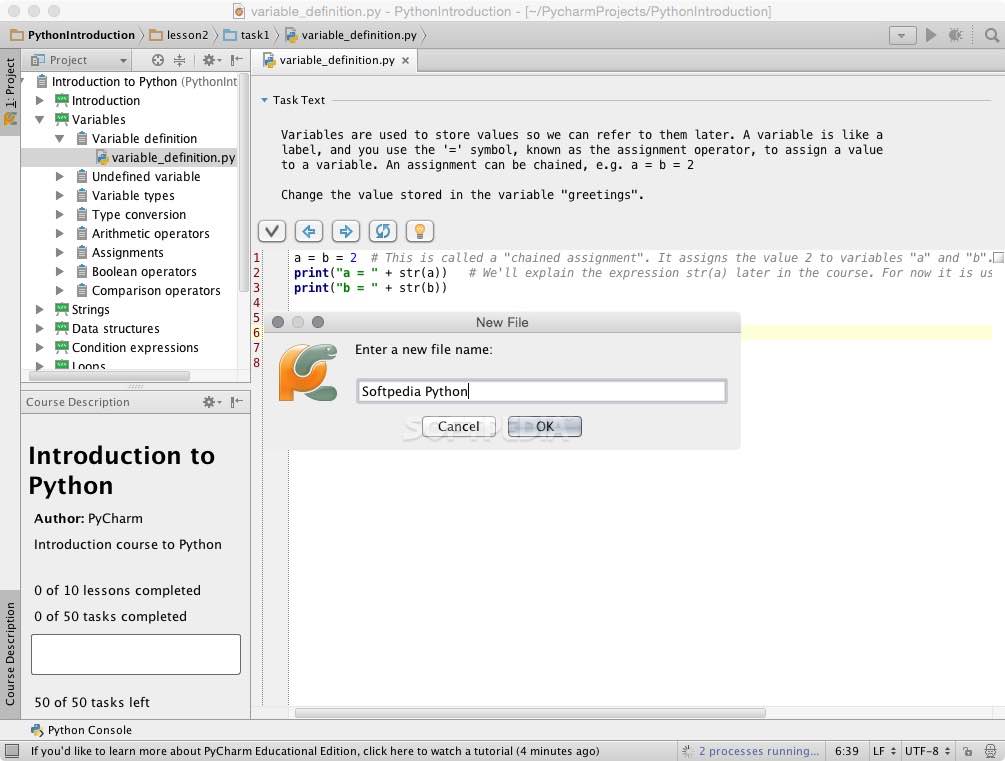

Web scraping, web crawling, web harvesting, and web data extraction are synonyms for the act of mining data from web pages across the Internet. Web scrapers or web crawlers are tools that go over web pages programmatically, extracting the required data. This data, usually large sets of text, can be used for analytical purposes, to understand products, or to satisfy one's curiosity about a certain web page. If you are wondering how to go about web crawling, we will show you the basics of web scraping through a simple data set. You should be able to follow along with the tutorial regardless of your level of programming expertise.

For the practical example, we will be using our CloudSigma blog. We will try to get information about the tutorials on our blog page. By the time you reach this tutorial's conclusion, you will have a functioning web scraper made with Python 3 that crawls several pages of our blog section and then displays the data on your screen. Using the knowledge from creating this basic web scraper, you can expand on it and create your own web scrapers. This should be fun, let's begin!

Prerequisites

This is a hands-on tutorial, so you should have a local development environment for Python 3 to follow along well. First, you can refer to our tutorial on how to install Python 3 and set up a local programming environment on Ubuntu.

Step 1: How to Build a Simple Web Scraper

Web scraping involves two steps: the first step is finding and downloading web pages, the second step is crawling through and extracting information from those web pages.

There are a number of ways and libraries that can be used to build a web scraper from scratch in many programming languages. However, building from scratch may bring issues in the future when your web scraper becomes complex, or when you need to crawl multiple pages with different settings and patterns at a time. It may also be quite a heavy task to figure out how to transform your scraped data between different formats such as CSV, XML, or JSON. While some may appreciate the challenge of building their own web scraper from scratch, it is better not to reinvent the wheel and instead to build on top of an existing library that handles all those issues.

We will be using Scrapy, a Python library, together with Python 3 to implement the web scraper in this tutorial. Scrapy is an open-source tool and one of the most popular and powerful Python web scraping libraries. It was built to handle some of the common functionalities that all scrapers should have, so you don't have to reinvent the wheel whenever you want to implement a web crawler. With Scrapy, the process of building a scraper becomes easy and fun.

Scrapy is available from PyPI, the Python Package Index. PyPI is a community-owned repository that hosts most Python packages. When you install and set up Python 3 on your local development environment, it installs pip too, which you can use to install Python packages. First, install Scrapy by running pip install scrapy.

Below is an explanation of each line of the scraper's code:

The first line imports Scrapy, allowing us to use the various classes that the package provides. In the next line, we extend the Spider class provided by Scrapy and create a subclass called CloudSigmaCrawler. By extending a class (here, Spider), we get access to the class's properties, which we can now use in our code. In this case, the Spider class has methods and behaviors that define how to follow URLs and extract data from web pages. However, it doesn't know which URLs to follow or what data to extract. By extending it, we can provide the required information to the methods. To understand more about subclassing and extending, read up on object-oriented programming principles.

In our CloudSigmaCrawler, we define the required attributes. First, we name our spider cloudsigma_crawler. Then, we provide a single URL to start from. Opening this URL will take you to page 1 of the CloudSigma blog, which contains some of the many tutorials.

There are several ways to run the scraper. If you are using an IDE, for example, the PyCharm community edition from JetBrains, it probably comes with a button that you can just click to run the script. Another option is the typical way of running Python files from the command line: python path/to/file.py, or py path/to/file.py. A third option is Scrapy's own command-line interface, which helps in starting a scraper.

Enter the following command to start the scraper:

Depending on the version of Scrapy that you installed, you should see an output that is something like the following:

As you can see, the output is quite long, so we picked just some parts.

Here is what happened when you executed the command:
